Python Tricks: Master Advanced Coding Techniques for Better Performance

- Stop writing redundant loops with list comprehensions and generator expressions.

- Clean up your function logic using decorators and closures.

- Handle memory and performance bottlenecks with generators.

- Write cleaner, more maintainable code with unpacking and slicing tricks.

Writing Leaner Data Structures

One of the most common signs of a beginner is the "empty list and for-loop" pattern. You create a list, loop through a collection, check a condition, and append a value. It works, but it is verbose and usually a little slower than it needs to be. Professional developers use list comprehensions to handle this in one line. For example, if you're filtering a list of user ages to keep only those over 18, don't write five lines of code. A list comprehension combines the loop and the condition into a single expression, for example [age for age in ages if age > 18]. This isn't just about saving keystrokes; in CPython it is typically faster because the interpreter appends each item with a dedicated bytecode instruction instead of looking up and calling .append() on every iteration.

Beyond lists, you can use dictionary comprehensions to map keys to values just as concisely. Imagine you have a list of product names and you want to create a map of their lengths for a UI layout. Instead of a traditional loop, you can generate that dictionary in a single, readable line, for example {name: len(name) for name in product_names}. It makes your code look intentional and clean.

Mastering Function Power with Decorators

Sometimes you need to add functionality to a function without actually changing its internal code. This is exactly where decorators come into play. A decorator is essentially a function that takes another function and extends its behavior without explicitly modifying it. Think about logging. If you have twenty different functions and you want to log how long each one takes to execute, you wouldn't want to manually add timing code to every single one. By creating a "timer" decorator, you can simply place @timer above your function definition. This separates the business logic (what the function does) from the administrative logic (how we monitor it).

This pattern is used heavily in frameworks like Flask for routing or in Django for permission checks. Once you understand that functions are first-class objects in Python (meaning they can be passed around like variables), decorators become a superpower for keeping your codebase DRY (Don't Repeat Yourself).
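As a rough illustration of the "timer" idea described above, here is a minimal sketch. The names timer and total_up_to are made up for this example, and printing the elapsed time is just one possible way to report it; a real project would more likely use the logging module.

```python
import functools
import time


def timer(func):
    """Report how long each call to func takes (illustrative sketch)."""
    @functools.wraps(func)  # keep func's name and docstring on the wrapper
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            # Runs whether func returned normally or raised
            elapsed = time.perf_counter() - start
            print(f"{func.__name__} took {elapsed:.6f}s")
    return wrapper


@timer
def total_up_to(n):
    """Hypothetical business logic: sum the integers below n."""
    return sum(range(n))


result = total_up_to(1_000)
```

The function body contains no timing code at all; removing the @timer line removes the monitoring without touching the logic.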

Memory Efficiency and Generators

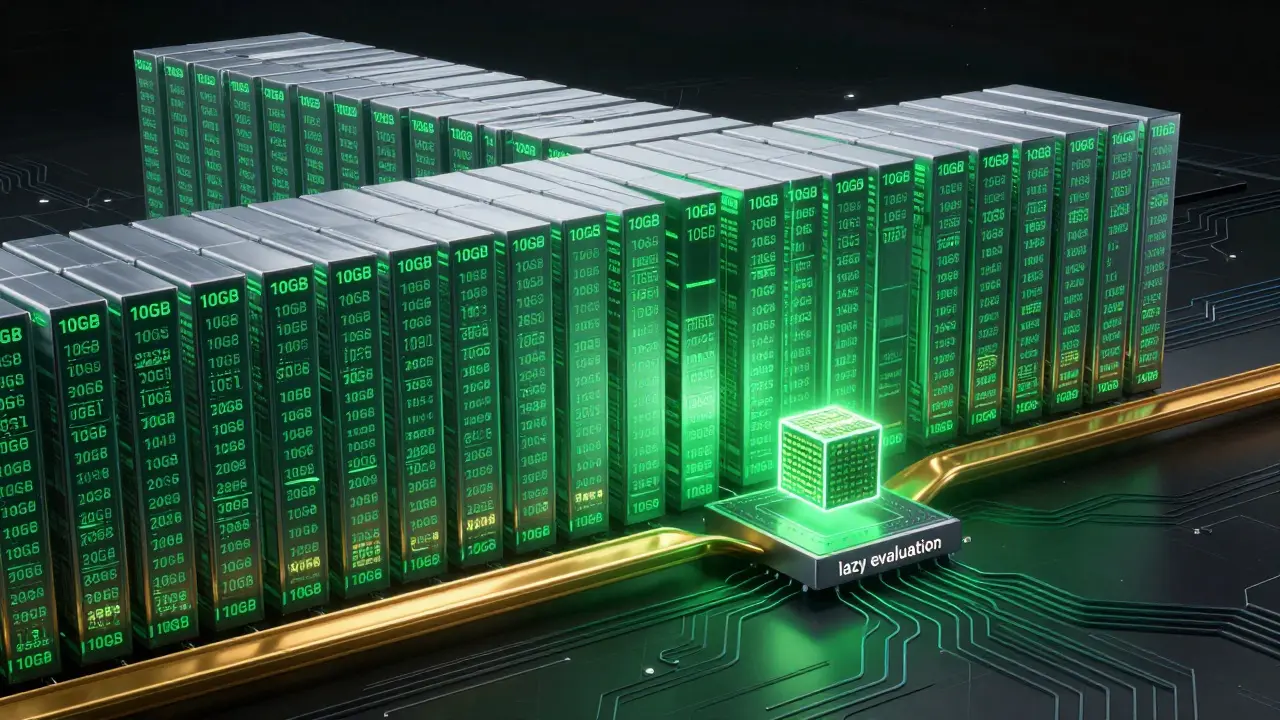

When you're dealing with massive datasets (like reading a 10GB log file), loading everything into a list can exhaust your RAM and crash your program with a MemoryError. This is where generators save the day. Unlike a list, which stores every element in RAM, a generator yields items one at a time only when requested. By replacing the square brackets [] with parentheses () in a comprehension, you create a generator expression. If you're summing up a billion numbers, a list comprehension would allocate memory for every single number before summing them. A generator expression processes them one by one, keeping the memory footprint small and roughly constant regardless of the dataset size.
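The difference can be sketched on a smaller scale. The example below uses one million numbers rather than a billion so it runs quickly, and the variable names are only illustrative. Note that sys.getsizeof reports the size of the container object itself, not of the integers it refers to, so the real gap is even larger than these numbers suggest.

```python
import sys

numbers = range(1_000_000)

squares_list = [n * n for n in numbers]   # builds every element up front
squares_gen = (n * n for n in numbers)    # computes nothing until iterated

list_size = sys.getsizeof(squares_list)   # grows with the number of elements
gen_size = sys.getsizeof(squares_gen)     # small, independent of the input length

# Passing a generator expression straight to sum() avoids the intermediate list
total = sum(n * n for n in numbers)
```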

Another pro move is using the yield keyword. When a function uses yield, it becomes a generator. This allows you to maintain the state of a function call, pause it, and resume it later. This is incredibly useful for streaming data from an API where you don't know when the total response will arrive but want to start processing the first few chunks immediately.
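Here is a small sketch of a generator function that pauses and resumes. The read_chunks helper and the fake_stream input are hypothetical stand-ins for a file or an API response; the point is only that the first chunk is available before the rest of the input has been consumed.

```python
def read_chunks(lines, chunk_size):
    """Yield lists of up to chunk_size items from any iterable of lines."""
    chunk = []
    for line in lines:
        chunk.append(line)
        if len(chunk) == chunk_size:
            yield chunk          # pause here; resume on the next request
            chunk = []
    if chunk:
        yield chunk              # emit the final, possibly shorter, chunk


# Stand-in for a file object or a streamed response
fake_stream = (f"line {i}" for i in range(5))
chunks = read_chunks(fake_stream, chunk_size=2)

first = next(chunks)             # only the first two lines have been read so far
rest = list(chunks)              # drain whatever remains
```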

| Feature | List Comprehension | Generator Expression | Traditional For-Loop |
|---|---|---|---|
| Memory Usage | High (stores all) | Very Low (lazy evaluation) | Depends on what the loop stores |
| Execution Speed | Usually fastest when you need the whole list | Slightly slower per item, but avoids building the list | Usually slowest for building a list |
| Syntax | Concise [x for x in y] | Concise (x for x in y) | Verbose |
| Return Type | List | Generator Object | None (build the result manually) |

Slicing and Unpacking Like a Pro

Python offers several "syntactic sugar" features that allow you to manipulate data with far less effort than in languages like Java or C++. One of the most powerful is extended iterable unpacking. If you have a list of values but only care about the first and last elements, you can use the asterisk (*) operator to capture everything in the middle into a separate list.

For example, if you're processing a CSV row where the first column is a timestamp and the last is a status code, you can write timestamp, *data, status = row. This instantly assigns the first item to timestamp, the last to status, and everything else into a list called data. No more calculating indices like row[1:-1].
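A quick sketch of that unpacking, using a made-up CSV row (the field values are invented for illustration):

```python
row = ["2024-01-01T12:00:00", "alice", "GET", "/index.html", "200"]

# First item, everything in between, last item
timestamp, *data, status = row

# The starred name always receives a list, even when nothing is left over
short_first, *short_middle, short_last = ["start", "end"]
```

The unpacking needs at least two items here; a one-element row would raise a ValueError because there is nothing to assign to the last name.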

Slicing is another area where pros excel. Beyond the basic [start:stop], using a step value [start:stop:step] allows for quick data manipulation. A classic trick to reverse a string or list is using [::-1]. While it looks cryptic to a beginner, it's the most efficient way to flip a sequence in Python because it's implemented in highly optimized C code.
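A few slices with a step value, shown on sample data chosen only for illustration:

```python
letters = "abcdefgh"
values = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]

reversed_letters = letters[::-1]   # 'hgfedcba'
every_other = values[::2]          # [0, 2, 4, 6, 8]
middle_by_three = values[1:8:3]    # [1, 4, 7]
last_three = values[-3:]           # [7, 8, 9]
```

Slicing always produces a new object, so the original string and list are left unchanged.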

Advanced Argument Handling

Writing flexible functions requires moving beyond simple positional arguments. Professional Pythonistas use *args and **kwargs to create functions that can handle any number of inputs. This is essential when building wrappers or APIs where the user might want to pass an arbitrary number of configuration options.
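As a sketch of the wrapper use case, the hypothetical log_call helper below forwards whatever it receives to another function, and the equally hypothetical connect function collects extra configuration options into a dictionary:

```python
def log_call(func, *args, **kwargs):
    """Call func with whatever was passed; return a description and the result."""
    description = f"{func.__name__}(args={args}, kwargs={kwargs})"
    return description, func(*args, **kwargs)


def connect(host, port=5432, **options):
    """Hypothetical function: unknown keyword options end up in a dict."""
    return {"host": host, "port": port, **options}


description, config = log_call(connect, "db.local", port=6543, timeout=10)
```

Inside log_call, args is a tuple and kwargs is a dict; the * and ** in the call func(*args, **kwargs) unpack them again.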

However, a more modern approach is using Keyword-Only Arguments. By placing an asterisk * in the function signature, you force the caller to specify the name of the argument. For instance, in a function like def create_user(*, username, email):, you cannot call it as create_user("john", "[email protected]"). You must call it as create_user(username="john", email="[email protected]"). This prevents bugs where a developer accidentally swaps two strings in a function call, which is a common nightmare in large-scale projects.
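The behavior can be checked with a short sketch. The function body and the example.com address are placeholders invented for this illustration:

```python
def create_user(*, username, email):
    """Everything after the bare * must be passed by keyword."""
    return {"username": username, "email": email}


user = create_user(username="john", email="john@example.com")

# A positional call is rejected before the function body runs
try:
    create_user("john", "john@example.com")
except TypeError as exc:
    error = str(exc)
```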

Furthermore, leveraging f-strings (introduced in Python 3.6) is no longer optional; it's the standard. Not only are they faster than .format() or %, but they also allow for inline expressions. You can perform math or call methods directly inside the curly braces, making your string formatting much more dynamic and easier to read.
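For instance, a method call, arithmetic, and a format specifier can all sit inside the braces. The values below are invented for illustration:

```python
name = "widget"
price = 19.989
quantity = 3

# Method call, format spec, and arithmetic inside one f-string
line = f"{name.upper()}: {quantity} x {price:.2f} = {price * quantity:.2f}"
```

Here line ends up as "WIDGET: 3 x 19.99 = 59.97". Keeping the expressions short is a good habit; anything more involved usually reads better as a named variable first.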

Common Pitfalls to Avoid

Even pros make mistakes, but they avoid the ones that cause silent bugs. The most notorious is using a mutable default argument, like def add_item(item, list=[]):. Because default arguments are evaluated only once when the function is defined, that list persists across every call to the function. If you add an item in one call, it will still be there in the next call, leading to bizarre data leakage.

Instead, always use None as the default and initialize the list inside the function. This ensures a fresh list is created for every single execution. It's a small detail, but it's the difference between a stable production app and one that crashes randomly in the middle of the night.
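Both versions side by side, as a minimal sketch. The parameter is named items here rather than list so that it does not shadow the built-in type; that rename is a choice made for this example.

```python
def add_item_buggy(item, items=[]):
    """The default list is created once and shared by every call."""
    items.append(item)
    return items


def add_item(item, items=None):
    """A fresh list is created whenever the caller does not supply one."""
    if items is None:
        items = []
    items.append(item)
    return items


first_buggy = add_item_buggy("a")
second_buggy = add_item_buggy("b")   # the same list object, now ['a', 'b']

first = add_item("a")                # ['a']
second = add_item("b")               # ['b']
```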

Another trap is using is when you mean ==. Remember that == checks for equality (do these two things have the same value?), while is checks for identity (are these two variables actually the same object in memory?). Using is to compare integers or strings might appear to work for small values because CPython caches small integers and interns some strings, but that is an implementation detail and it will eventually fail as your data grows. Always use == for value comparison, and reserve is for identity checks such as x is None.
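The distinction is easiest to see with lists, where no caching is involved. This sketch deliberately avoids integers and strings, since their identity behavior depends on the interpreter:

```python
a = [1, 2, 3]
b = [1, 2, 3]
c = a

equal_values = a == b    # True: same contents
same_object = a is b     # False: two separate list objects
alias = c is a           # True: c is just another name for a

value = None
is_missing = value is None   # identity is the right check for None
```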

Are list comprehensions always faster than for-loops?

Generally, yes. List comprehensions are optimized at the C-level in the CPython interpreter, which reduces the overhead of calling the .append() method in every iteration. However, if the logic inside the loop becomes too complex (e.g., multiple nested loops or heavy conditional logic), a standard for-loop is often more readable and may perform similarly. The goal should be a balance between speed and maintainability.
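To make the comparison concrete, here are the two styles next to each other, followed by the dictionary variant. The sample data is invented for illustration.

```python
ages = [12, 25, 17, 40, 18, 33]

# The "empty list and for-loop" pattern
adults_loop = []
for age in ages:
    if age > 18:
        adults_loop.append(age)

# The same logic as a list comprehension
adults = [age for age in ages if age > 18]

# A dictionary comprehension follows the same shape
product_names = ["lamp", "bookshelf", "desk"]
name_lengths = {name: len(name) for name in product_names}
```

Both versions produce the same list. If the speed difference matters for your workload, measuring both with the standard library's timeit module on realistic data is more reliable than a rule of thumb.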

When should I use a generator instead of a list?

Use a generator when you are dealing with large datasets that don't fit in memory, or when you only need to iterate through the data once. Generators are "lazy," meaning they produce values on the fly. If you need to access elements by index (e.g., my_list[5]) or if you need to iterate over the same collection multiple times, a list is the better choice because generators are exhausted after one full iteration.
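Exhaustion is easy to demonstrate with a small sketch:

```python
squares = (n * n for n in range(4))

first_pass = list(squares)    # [0, 1, 4, 9]
second_pass = list(squares)   # []: the generator is already exhausted

# A list can be indexed and iterated as often as needed
squares_list = [n * n for n in range(4)]
third = squares_list[2]       # 4
again = list(squares_list)    # [0, 1, 4, 9]
```

Trying squares[2] on the generator would raise a TypeError, because generators do not support indexing.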

How do I debug a decorator?

Debugging decorators can be tricky because they wrap the original function. The best way to handle this is by using functools.wraps. This utility ensures that the original function's metadata (like its name and docstring) is preserved. Without it, if you check my_function.__name__, it will return the name of the wrapper inside the decorator instead of the actual function name, making stack traces very confusing.
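The effect shows up when the same wrapper is written with and without functools.wraps. The decorator and function names below are hypothetical and do nothing except forward the call:

```python
import functools


def plain_decorator(func):
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs)
    return wrapper


def wrapped_decorator(func):
    @functools.wraps(func)   # copy name, docstring, and other metadata
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs)
    return wrapper


@plain_decorator
def greet():
    """Say hello."""
    return "hello"


@wrapped_decorator
def farewell():
    """Say goodbye."""
    return "goodbye"


names = (greet.__name__, farewell.__name__)   # ('wrapper', 'farewell')
docs = (greet.__doc__, farewell.__doc__)      # (None, 'Say goodbye.')
```

functools.wraps also sets a __wrapped__ attribute on the wrapper, which gives you a handle on the original, undecorated function while debugging.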

Is [::-1] the best way to reverse a string?

For most use cases, yes. Slicing is implemented in C and is incredibly fast. While the reversed() function also exists, it returns an iterator. If you need the result as a string, you'd have to join the iterator back together ("".join(reversed(s))), which is slower and more verbose than the slicing trick.

What is the impact of using *args and **kwargs on performance?

The performance hit is negligible for the vast majority of applications. The primary cost is the creation of a tuple (for args) and a dictionary (for kwargs) during the function call. The trade-off is almost always worth it for the flexibility and cleaner API design it provides, especially when building libraries or frameworks.

Next Steps for Leveling Up

If you've mastered these tricks, the next logical step is to explore the itertools and collections modules. These are standard library powerhouses that provide tools for complex iterations and specialized data structures like defaultdict and Counter, which replace a lot of manual dictionary logic.
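As a small taste of both modules, here is a sketch with invented sample data. Counter replaces manual counting, defaultdict replaces the "check if the key exists first" dance, and itertools.islice takes a finite slice of an endless iterator:

```python
from collections import Counter, defaultdict
from itertools import count, islice

words = ["apple", "avocado", "banana", "apple", "cherry", "banana", "apple"]

# Count occurrences without writing any dictionary bookkeeping
word_counts = Counter(words)
top = word_counts.most_common(1)          # [('apple', 3)]

# Group words by first letter; missing keys start out as empty lists
by_letter = defaultdict(list)
for word in words:
    by_letter[word[0]].append(word)

# Take the first five values from an infinite counter: 0, 2, 4, ...
first_evens = list(islice(count(0, 2), 5))
```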

For those moving into highly concurrent, I/O-bound work such as network services, look into asyncio for concurrent programming. Understanding how to write non-blocking code will allow you to handle thousands of simultaneous connections, which matters a great deal when scaling modern web applications. Finally, start profiling your code using the cProfile module to see exactly where your bottlenecks are, rather than guessing where to optimize.