Python Tricks: The Secret to Becoming a Python Coding Pro

Most Python developers hit a wall. They know the basics (loops, functions, lists), but they’re still writing slow, clunky code. You’ve seen it: 20-line functions that could be one line, loops that should’ve been list comprehensions, variables that aren’t needed at all. The difference between a good Python coder and a pro isn’t about knowing more libraries. It’s about Python tricks: the small, often overlooked patterns that make code faster, cleaner, and smarter.

Stop Writing Loops. Use List Comprehensions Instead.

How many times have you written this?

    numbers = []
    for i in range(10):
        numbers.append(i * 2)

That’s three lines of code for something Python can do in one:

    numbers = [i * 2 for i in range(10)]

List comprehensions aren’t just shorter. They’re usually faster too, because CPython appends each item with a dedicated bytecode instruction instead of looking up and calling numbers.append on every iteration. The exact gain depends on your Python version and workload, so measure before you rely on it. And they’re readable once you get used to them. Think of them as a recipe: “Take each item, do this to it, put it in a new list.”

They work with conditions too:

    even_squares = [x**2 for x in range(20) if x % 2 == 0]

This gives you the squares of even numbers from 0 to 18. No loops. No extra variables. Just pure, clean Python.
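If the filtered form still looks cryptic, it may help to see it next to the loop it replaces. Here is a minimal sketch that builds the same list both ways; the names loop_result, comp_result, even_set, and square_of are illustrative only.

```python
# Build the squares of even numbers with an explicit loop...
loop_result = []
for x in range(20):
    if x % 2 == 0:
        loop_result.append(x**2)

# ...and with the equivalent comprehension.
comp_result = [x**2 for x in range(20) if x % 2 == 0]

# The same syntax also builds sets and dicts.
even_set = {x for x in range(20) if x % 2 == 0}
square_of = {x: x**2 for x in range(5)}

print(loop_result == comp_result)
print(square_of)
```

Read the comprehension left to right as "the result, the loop, the filter" and it maps directly onto the three nested lines of the loop version.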

Use unpacking to avoid unnecessary variables

Ever written code like this?

    coords = (10, 20)
    x = coords[0]
    y = coords[1]

It works. But it’s more verbose than it needs to be. Python lets you unpack directly:

    x, y = (10, 20)

Works with lists too:

    name, age, city = ["Alice", 28, "Brisbane"]

And if you only care about part of the data? Use the underscore:

    _, _, city = ["Alice", 28, "Brisbane"]

By convention, the underscore tells readers: “I don’t need the first two values.” Python still assigns them to _, but nothing uses them. This isn’t just cleaner; it prevents bugs. No more accidentally using coords[1] when you meant coords[0].
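Unpacking goes a little further than fixed-length sequences. The sketch below shows starred unpacking and the classic swap; the record list and its contents are made up for illustration.

```python
record = ["Alice", 28, "Brisbane", "Engineer", "Python"]

# Take the first item and collect everything else into a list.
name, *details = record

# Take both ends and ignore whatever sits in the middle.
first, *_, last = record

# Swap two values without a temporary variable.
a, b = 1, 2
a, b = b, a

print(name, details)
print(first, last)
print(a, b)
```

The starred name always receives a list, even when it ends up empty, so this pattern is safe for sequences of varying length as long as the non-starred names can be filled.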

Dictionary.get() is your best friend

How many times have you seen this?

    if 'user' in config:
        username = config['user']
    else:
        username = 'guest'

That’s four lines. Here’s the pro version:

    username = config.get('user', 'guest')

get() checks if the key exists. If it does, it returns the value. If not, it returns the default you provide. No conditionals. No KeyError crashes. And it’s readable at a glance.

Use it for nested data too:

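    city = user_data.get('profile', {}).get('location', 'Unknown')

If profile doesn’t exist, the first get() returns an empty dict. The second get() then looks for location and, if that’s missing, falls back to 'Unknown'. No nested if statements. One caveat: the default only applies when the key is absent, so a profile that is present but set to None will still raise an AttributeError.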
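Chained get() calls only protect you from missing keys. If a key can be present with the value None (common in JSON payloads), the second get() fails. One possible way to handle both cases is sketched below; get_city is a hypothetical helper, not something from a library.

```python
def get_city(user_data):
    """Return the user's location, or 'Unknown' if any level is missing or None."""
    # `or {}` covers a missing key and an explicit None (or any other falsy value).
    profile = user_data.get('profile') or {}
    return profile.get('location', 'Unknown')


print(get_city({'profile': {'location': 'Brisbane'}}))
print(get_city({}))
print(get_city({'profile': None}))
```

The trade-off is that `or {}` also replaces other falsy values such as an empty string, which is usually what you want here but worth knowing.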

Use generators to save memory

Imagine you’re reading a 10GB log file. You want to count how many lines contain “ERROR.” You could do this:

    lines = open('log.txt').readlines()
    count = sum(1 for line in lines if "ERROR" in line)

That loads the entire file into memory. Bad idea. Instead, use a generator expression over the file itself:

    with open('log.txt') as f:
        count = sum(1 for line in f if "ERROR" in line)

Notice the difference? No readlines(). Just iterate directly over the file object. Python reads one line at a time, checks it, and moves on. Memory usage stays roughly constant, even for 100GB files.

Lazy iteration is everywhere in Python. range() produces its numbers on demand, and enumerate(), zip(), and map() return iterators rather than lists. These tools aren’t just for big files; they’re for any time you’re processing data in sequence.
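When a one-line generator expression gets too crowded, a generator function does the same job with yield. This is a minimal sketch: error_lines is a made-up name, and io.StringIO stands in for a real log file so the example runs anywhere.

```python
import io


def error_lines(lines):
    """Yield only the lines that contain 'ERROR', one at a time."""
    for line in lines:
        if "ERROR" in line:
            yield line.rstrip("\n")


# io.StringIO stands in for a real log file here.
fake_log = io.StringIO("INFO start\nERROR disk full\nINFO retry\nERROR timeout\n")

errors = error_lines(fake_log)   # nothing has been read yet
first_error = next(errors)       # reads just far enough to find one match
remaining = list(errors)         # consumes the rest

print(first_error)
print(remaining)
```

Nothing runs until you ask for a value, and a generator can only be consumed once. If you need the results twice, store them in a list or call the function again.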

Use enumerate() instead of manual counters

How often do you write this?

    i = 0
    for item in items:
        print(i, item)
        i += 1

It works. But it’s fragile. What if you forget to increment? What if you copy-paste this code and miss the counter?

Just use enumerate():

    for i, item in enumerate(items):
        print(i, item)

It returns both index and value. Clean. Safe. No extra variables. You can even start from a different number:

    for i, item in enumerate(items, start=1):
        print(f"{i}. {item}")

Now the numbering starts at 1. No math. No bugs.
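enumerate() also combines nicely with a comprehension when you want the positions of matching items rather than the items themselves. A small sketch, with sample data invented for the example:

```python
lines = ["ok", "ERROR: disk full", "ok", "ERROR: timeout"]

# Collect 1-based line numbers of every line that starts with "ERROR".
error_line_numbers = [
    number
    for number, line in enumerate(lines, start=1)
    if line.startswith("ERROR")
]

print(error_line_numbers)
```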

Use collections.Counter for counting

Need to count how many times each word appears in a text? You might write:

    word_count = {}
    for word in text.split():
        if word in word_count:
            word_count[word] += 1
        else:
            word_count[word] = 1

That’s six lines. Counter does it in one, plus an import:

    from collections import Counter

    word_count = Counter(text.split())

It’s usually faster. It’s readable. And it gives you built-in methods:

    most_common_word = word_count.most_common(1)[0][0]  # Top word

Counter accepts any iterable of hashable items: lists, strings (it counts characters), even custom objects as long as they are hashable. No more reinventing the wheel.
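Here is a short sketch of what else a Counter gives you beyond the initial count. The sample sentence is made up for the example.

```python
from collections import Counter

text = "the cat and the hat and the bat"
word_count = Counter(text.split())

top_two = word_count.most_common(2)   # list of (word, count) pairs
missing = word_count["dog"]           # missing keys count as 0, no KeyError

# A Counter can absorb more data later.
word_count.update(["cat", "cat"])

print(top_two)
print(missing)
print(word_count["cat"])
```

Because missing keys read as zero, you rarely need get() or an existence check when working with a Counter.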

Use any() and all() for boolean checks

Instead of:

    has_valid = False
    for value in values:
        if value > 0:
            has_valid = True
            break

Just write:

    has_valid = any(value > 0 for value in values)

any() returns True as soon as it finds one truthy result, then stops. No flag variable. No explicit loop.

Same with all():

    all_positive = all(value > 0 for value in values)

These aren’t just shortcuts. They’re safer. You can’t forget to break. You can’t accidentally set has_valid to False later.
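The early exit is easy to verify for yourself. In this sketch, is_positive is a hypothetical helper that records every value it inspects, so you can see where any() stopped. The last two lines show the empty-input behavior, which sometimes surprises people.

```python
checked = []


def is_positive(value):
    """Record each value we inspect so the short-circuit is visible."""
    checked.append(value)
    return value > 0


values = [-1, 5, -3, 7]
has_valid = any(is_positive(v) for v in values)

# Edge cases worth knowing for empty inputs.
any_empty = any([])   # nothing is truthy
all_empty = all([])   # nothing is falsy

print(has_valid, checked)
print(any_empty, all_empty)
```

Note that the short-circuit only helps when you pass a generator expression. Wrapping the condition in a list comprehension evaluates every item before any() sees the first one.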

Use with for files and resources

You know you should close files. But you forget. So you write:

    f = open('data.txt')
    data = f.read()
    f.close()

What if an error happens between open() and close()? The file stays open and you leak a file handle. Bad.

Use with:

    with open('data.txt') as f:
        data = f.read()

Python closes the file automatically, even if an exception is raised inside the block. The same pattern works for database connections, network sockets, locks, and more. Always use with. Always.
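You can give your own resources the same guarantee. The sketch below uses contextlib.contextmanager from the standard library; tracked and the events list are hypothetical stand-ins for a real resource such as a connection.

```python
from contextlib import contextmanager

events = []


@contextmanager
def tracked(name):
    """Hypothetical resource: records setup and cleanup in `events`."""
    events.append(f"open {name}")
    try:
        yield name
    finally:
        # Runs whether the block finished normally or raised.
        events.append(f"close {name}")


try:
    with tracked("db") as resource:
        events.append(f"using {resource}")
        raise ValueError("something went wrong")
except ValueError:
    events.append("handled error")

print(events)
```

The cleanup step runs before the exception reaches the except clause, which is exactly the ordering you want when releasing locks or connections.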

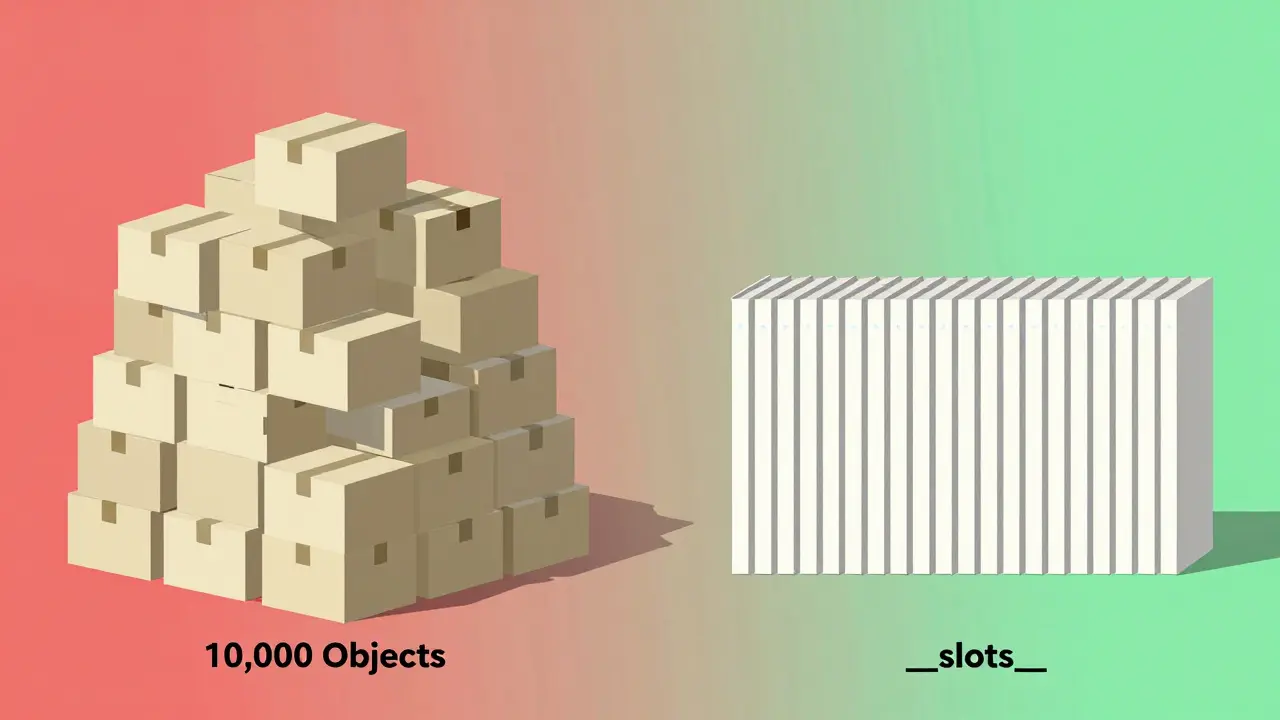

Use __slots__ to cut memory use

If you’re creating thousands of objects (say, a game with 10,000 enemy characters), Python’s default object structure is heavy. Each object carries its own dictionary for attributes, which can add a hundred bytes or more per instance depending on the Python version.

Use __slots__ to fix it:

    class Enemy:
        __slots__ = ['x', 'y', 'health', 'level']

        def __init__(self, x, y, health, level):
            self.x = x
            self.y = y
            self.health = health
            self.level = level

Now each Enemy uses substantially less memory. The saving can reach the 40-60% range for small objects like this one, but it varies with the Python version, so measure it. No per-instance dictionary. Just fixed slots. The trade-off: you can no longer add attributes that aren’t listed in __slots__.

Only reach for this when you have a very large number of instances, hundreds of thousands or more. For small apps? Skip it. But for games, simulations, or data pipelines, the savings add up quickly.
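To see what actually changes, compare a slotted class with a plain one. This sketch avoids quoting byte counts, since those vary between Python versions, and checks the structural difference instead. SlottedEnemy and PlainEnemy are illustrative names.

```python
class SlottedEnemy:
    __slots__ = ('x', 'y', 'health', 'level')

    def __init__(self, x, y, health, level):
        self.x = x
        self.y = y
        self.health = health
        self.level = level


class PlainEnemy:
    def __init__(self, x, y, health, level):
        self.x = x
        self.y = y
        self.health = health
        self.level = level


slotted = SlottedEnemy(0, 0, 100, 1)
plain = PlainEnemy(0, 0, 100, 1)

# The plain instance owns a dict; the slotted one does not.
has_dict_plain = hasattr(plain, '__dict__')
has_dict_slotted = hasattr(slotted, '__dict__')

# Assigning an attribute that is not listed in __slots__ fails.
try:
    slotted.name = "goblin"
    rejected = False
except AttributeError:
    rejected = True

print(has_dict_plain, has_dict_slotted, rejected)
```

That rejected assignment is the price of the memory saving, so __slots__ fits best on classes whose attributes are fixed and known up front.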

Use functools.lru_cache for expensive functions

Ever written a recursive function that runs slow? Like Fibonacci:

    def fib(n):
        if n < 2:
            return n
        return fib(n-1) + fib(n-2)

It recalculates the same values over and over. Try fib(35). It’ll take seconds.

Add a decorator:

    from functools import lru_cache

    @lru_cache(maxsize=None)
    def fib(n):
        if n < 2:
            return n
        return fib(n-1) + fib(n-2)

Now it runs in milliseconds. lru_cache remembers every result. Next time you call fib(10), it returns the stored value. No recalculating.

Use it for heavy math or any pure function that’s called repeatedly with the same inputs. It can also wrap API calls and database queries, but only when a stale answer is acceptable, because the cache never refreshes on its own. The arguments must be hashable.
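You don’t have to take the speedup on faith. Functions wrapped with lru_cache expose cache_info() and cache_clear(), which make the caching visible. A minimal sketch:

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def fib(n):
    if n < 2:
        return n
    return fib(n - 1) + fib(n - 2)


result = fib(30)
info = fib.cache_info()    # named tuple: hits, misses, maxsize, currsize

fib.cache_clear()          # empties the cache, e.g. when data may be stale
cleared = fib.cache_info()

print(result)
print(info)
print(cleared.currsize)
```

Each distinct argument is computed once (a miss) and every repeat is served from the cache (a hit). With maxsize=None the cache grows without bound, so set a limit if the function can see many different inputs.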

Stop using += for strings

This looks fine:

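    result = ""
    for word in words:
        result += word + " "

But strings are immutable in Python. Every += can create a new string. If you’re joining 10,000 words, you may be creating 10,000 intermediate strings and copying the growing result each time. CPython can sometimes optimize this in place, but you can’t rely on it.

Use join():

    result = " ".join(words)

One pass. One final allocation. Often several times faster on large inputs. It also drops the trailing space the loop leaves behind. Prefer join() whenever you combine many strings.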
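Two details are worth checking when you switch from += to join(): the output is not byte-for-byte identical, and join() only accepts strings. The sketch below shows both, using made-up sample data.

```python
words = ["fast", "clean", "smart"]

# The loop version leaves a trailing separator behind.
looped = ""
for word in words:
    looped += word + " "

# join() puts the separator only between items.
joined = " ".join(words)

# join() only accepts strings, so convert other types first.
numbers = [1, 2, 3]
csv_row = ",".join(str(n) for n in numbers)

print(repr(looped))
print(repr(joined))
print(csv_row)
```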

Final thought: Master the tools, not the syntax

Python isn’t about memorizing 50 tricks. It’s about using the right tool for the job. List comprehensions. Generators. Counter. lru_cache. These aren’t magic. They’re part of Python’s design. The language gives you these tools because they solve real problems.

Pro coders don’t write more code. They write less: cleaner, faster, smarter. Start with one trick this week. Master it. Then add another. In six months, you won’t recognize your own code.

What’s the most important Python trick for beginners?

The most impactful trick for beginners is using list comprehensions instead of loops. They’re shorter, usually faster, and leave fewer places for bugs to hide. Start by replacing every simple for-loop that builds a list. You’ll notice the improvement in readability right away, and often in performance too.

Can Python tricks make my code faster without changing logic?

Yes. Many Python tricks improve speed without changing what the code computes. For example, using join() instead of += builds the same text (minus the loop’s trailing space) with far less copying on large inputs. Others, like get() instead of if key in dict, are mainly about clarity rather than speed. These are refinements, not rewrites.

Are Python tricks compatible with older Python versions?

Everything in this article works in Python 3.6+, which is where the f-string used in the enumerate() example arrived. List comprehensions, generators, enumerate(), with, join(), and __slots__ all date back to Python 2. Counter was added in Python 2.7 and 3.1, and lru_cache in Python 3.2. Any currently supported Python 3 release runs all of these examples.

Do Python tricks work in Jupyter Notebooks?

Absolutely. In fact, Jupyter is a great place to test them. You can try a list comprehension in one cell and compare its speed to a loop in the next. Use %timeit to measure performance. Most tricks improve speed and clarity in notebooks, making them perfect for learning.
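Outside a notebook, the standard-library timeit module gives you the same kind of measurement. This is a rough sketch, not a rigorous benchmark: the function names are made up, and the numbers you get will depend on your machine and Python version.

```python
import timeit


def with_loop():
    numbers = []
    for i in range(1000):
        numbers.append(i * 2)
    return numbers


def with_comprehension():
    return [i * 2 for i in range(1000)]


# Run each function 1,000 times and report the total elapsed seconds.
loop_time = timeit.timeit(with_loop, number=1000)
comp_time = timeit.timeit(with_comprehension, number=1000)

print(f"loop: {loop_time:.4f}s  comprehension: {comp_time:.4f}s")
```

Run it a few times before drawing conclusions; single measurements are noisy, especially on a busy machine.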

Should I use all these tricks in every project?

No. Use them where they add value. __slots__ is great for thousands of objects, but overkill for a script that runs once. lru_cache helps with repeated calls, but adds overhead for one-time functions. The goal isn’t to use every trick-it’s to pick the right one for the job.