Python for AI: Why It Is the Pillar of Modern Technology

Why Python Dominates Artificial Intelligence

You might wonder why Python is the undisputed leader in artificial intelligence development when faster languages like C++ or Rust exist. The answer isn’t raw speed: it’s ecosystem velocity. In 2026, over 80% of new AI models are prototyped in Python before being optimized for production. This isn’t a coincidence; it’s the result of two decades of strategic library development that prioritizes developer experience over micro-optimizations.

The core problem with lower-level languages in AI work is friction. You configure toolchains and manage memory by hand before you can run a single experiment. With Python, you import a library and start training. That simplicity lets researchers iterate far faster than their counterparts using lower-level languages. Speed of iteration beats speed of execution when you’re trying to discover what works.

Key Takeaways

- Python’s dominance comes from its rich ecosystem (NumPy, PyTorch, TensorFlow), not raw computational speed.

- Dynamic typing and readability reduce cognitive load, allowing developers to focus on model architecture rather than syntax errors.

- Community-driven open-source contributions ensure rapid updates and bug fixes for critical AI libraries.

- Integration with C/C++ backends gives Python the best of both worlds: ease of use and high performance.

The Library Ecosystem: The Real Engine of AI

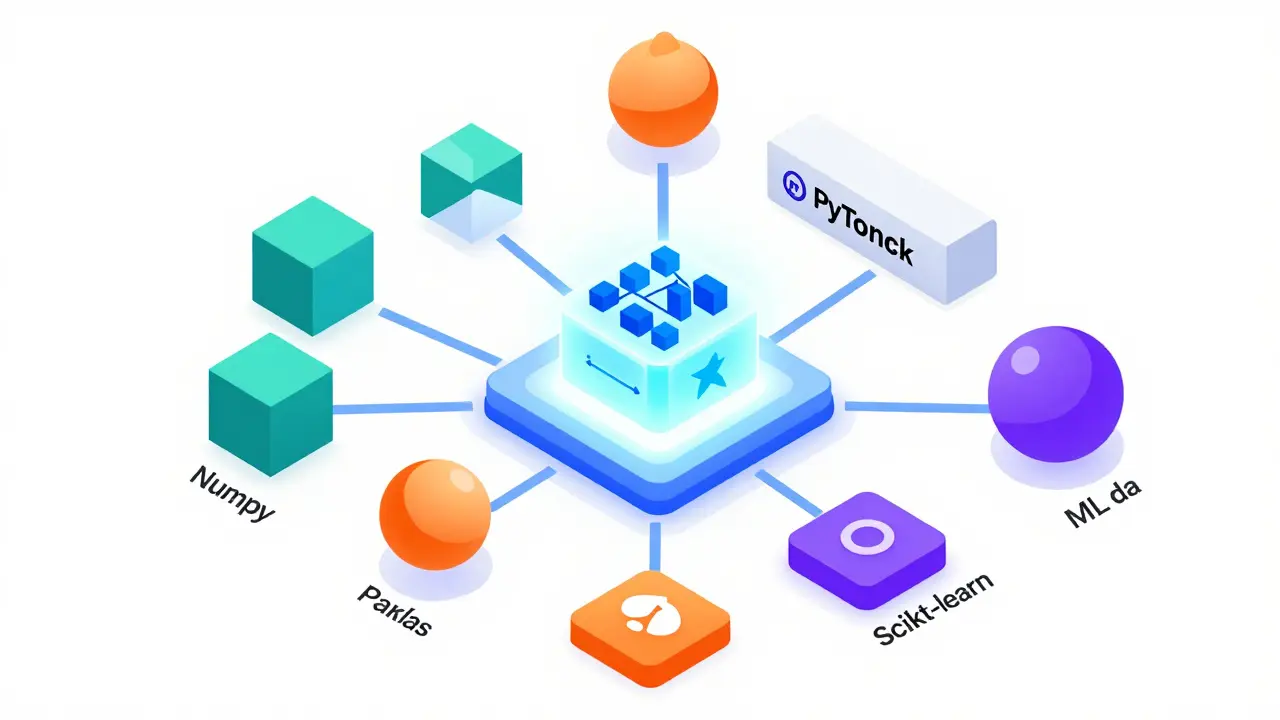

The real power of Python for AI lies in its comprehensive suite of specialized libraries. These tools handle everything from data preprocessing to neural network deployment. Without this stack, Python would just be another scripting language.

NumPy is the foundation of numerical computing in Python. It provides support for large, multi-dimensional arrays and matrices, along with a collection of mathematical functions to operate on these arrays. Before NumPy, doing matrix multiplication in Python was painfully slow. Now, it runs at near-C speeds because the heavy lifting happens in compiled code underneath.
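
To make that contrast concrete, here is a minimal sketch that multiplies the same two matrices with plain Python loops and with NumPy's `@` operator. The helper name `matmul_pure_python` and the 60x60 size are illustrative assumptions, and timings depend on your machine, so none are quoted here.

```python
import time
import numpy as np

def matmul_pure_python(a, b):
    """Multiply two matrices given as lists of lists using plain Python loops."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            total = 0.0
            for k in range(inner):
                total += a[i][k] * b[k][j]
            out[i][j] = total
    return out

rng = np.random.default_rng(seed=0)
a = rng.random((60, 60))
b = rng.random((60, 60))

start = time.perf_counter()
slow = matmul_pure_python(a.tolist(), b.tolist())
loop_seconds = time.perf_counter() - start

start = time.perf_counter()
fast = a @ b  # the product is computed in compiled code
numpy_seconds = time.perf_counter() - start

# Both approaches should agree up to floating-point rounding.
print(np.allclose(slow, fast))
print(f"loops: {loop_seconds:.4f}s, NumPy: {numpy_seconds:.6f}s")
```

Run it with a few different sizes to see how quickly the gap grows.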

For deep learning, you have two giants: PyTorch, a flexible deep learning framework developed by Meta, and TensorFlow, Google’s scalable machine learning platform. PyTorch has gained favor among researchers for its dynamic computation graph, which makes debugging intuitive. TensorFlow remains strong in production environments due to its robust deployment tools like TensorFlow Serving. Many teams today use PyTorch for research and export to ONNX or TensorRT for deployment.

Data manipulation relies heavily on Pandas, a powerful data analysis and manipulation tool. It turns raw CSV files into structured DataFrames, allowing you to clean, filter, and transform data with simple commands. Combined with Matplotlib, a plotting library for creating static, animated, and interactive visualizations, it lets you visualize your dataset’s distribution in seconds. This quick feedback loop is crucial for spotting anomalies before they ruin your model.
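
The clean, filter, and transform steps can be sketched in a few lines. The CSV content, the column names, and the 0 to 120 age bound below are made-up illustration data, not taken from any real dataset.

```python
import io
import pandas as pd

# Hypothetical raw data standing in for a CSV file on disk.
raw_csv = io.StringIO(
    "age,income,city\n"
    "34,52000,Lyon\n"
    ",61000,Paris\n"
    "29,,Lyon\n"
    "41,58000,Paris\n"
    "230,49000,Nice\n"
)

df = pd.read_csv(raw_csv)

# Clean: drop rows with missing values.
clean = df.dropna()

# Filter: keep plausible ages only (230 is an obvious entry error).
clean = clean[clean["age"].between(0, 120)]

# Transform: derive a new column from an existing one.
clean = clean.assign(income_k=clean["income"] / 1000)

print(clean)
print(clean["income_k"].describe())
```

The `describe()` summary is the numeric counterpart of a quick histogram: an implausible maximum or minimum tends to stand out immediately.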

| Library | Primary Function | Key Advantage | Best Use Case |
|---|---|---|---|
| NumPy | Numerical Computing | High-performance array operations | Mathematical foundations, linear algebra |
| PyTorch | Deep Learning Framework | Dynamic graphs, easy debugging | Research, prototype development |
| TensorFlow | Machine Learning Platform | Scalability, production deployment | Enterprise applications, mobile AI |
| Pandas | Data Manipulation | Intuitive data cleaning | Preprocessing tabular data |
| Scikit-learn | Traditional ML Algorithms | Simplicity, consistency | Classification, regression, clustering |

Readability and Developer Experience

Code readability directly impacts innovation speed. Python’s syntax encourages clarity: indentation is part of the grammar, and community conventions such as PEP 8 favor descriptive variable names and complex logic broken into small functions. This structure reduces bugs and makes collaboration easier.

Compare writing a neural network layer in C++ versus Python. In C++, you manage memory allocation, pointer arithmetic, and compiler flags. In Python, you define a class inheriting from `nn.Module` and override the forward method. The abstraction hides complexity without sacrificing control. This is why junior developers can contribute to AI projects within weeks, not months.
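
As a rough illustration of the Python side of that comparison, the sketch below defines a small layer by subclassing `nn.Module` and overriding `forward`. The class name `TinyDenseLayer` and the layer sizes are hypothetical, and PyTorch must be installed for it to run.

```python
import torch
from torch import nn

class TinyDenseLayer(nn.Module):
    """A fully connected layer followed by ReLU (hypothetical example class)."""

    def __init__(self, in_features: int, out_features: int) -> None:
        super().__init__()
        # nn.Linear creates and registers the weight and bias parameters.
        self.linear = nn.Linear(in_features, out_features)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return torch.relu(self.linear(x))

layer = TinyDenseLayer(in_features=8, out_features=4)
batch = torch.randn(16, 8)   # 16 samples, 8 features each
output = layer(batch)        # calling the module runs forward()
print(output.shape)
```

Memory for the parameters and the output tensor is allocated and freed by the framework, which is the complexity the abstraction hides.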

Dynamic typing also plays a role. You don’t need to declare variable types upfront. This flexibility speeds up experimentation. When testing different model architectures, you can swap components without rewriting type definitions. Statically typed languages often require more boilerplate, which slows down the trial-and-error process essential in AI research.
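
Here is a small pure-Python sketch of that kind of component swapping. `TinyModel` and the activation functions are hypothetical stand-ins for real model parts; the point is only that any callable can be plugged in without declaring an interface.

```python
import math

def relu(x):
    return max(0.0, x)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

class TinyModel:
    """Applies a weight and bias, then whatever activation it was given."""

    def __init__(self, weight, bias, activation):
        self.weight = weight
        self.bias = bias
        self.activation = activation  # any callable taking one number

    def predict(self, x):
        return self.activation(self.weight * x + self.bias)

# Swap the component without touching TinyModel or declaring any types.
for activation in (relu, sigmoid, math.tanh):
    model = TinyModel(weight=2.0, bias=-1.0, activation=activation)
    print(activation.__name__, model.predict(0.5))
```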

Performance Optimization Strategies

Critics often cite Python’s Global Interpreter Lock (GIL) as a weakness. The GIL does limit CPU-bound threads running pure Python code, but AI workloads largely sidestep it. Libraries like NumPy and PyTorch release the GIL while their compiled numerical kernels run, so threads can execute in parallel during those computations.
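
A minimal sketch of that pattern: several threads each run a NumPy matrix product, which executes in compiled code where the GIL can be released. The matrix sizes and the `project` helper are illustrative assumptions, and the example checks correctness only; any speedup depends on your hardware and BLAS build.

```python
from concurrent.futures import ThreadPoolExecutor
import numpy as np

rng = np.random.default_rng(seed=0)
weights = rng.random((256, 256))
# Four independent batches, standing in for separate chunks of work.
batches = [rng.random((256, 256)) for _ in range(4)]

def project(batch):
    # The matrix product runs in compiled code; NumPy can release the GIL
    # here, so other threads are free to run their own products meanwhile.
    return batch @ weights

with ThreadPoolExecutor(max_workers=4) as pool:
    threaded = list(pool.map(project, batches))

sequential = [project(batch) for batch in batches]

# Thread scheduling does not change the numerical results.
print(all(np.allclose(t, s) for t, s in zip(threaded, sequential)))
```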

For maximum performance, consider these strategies:

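- Use GPU Acceleration: PyTorch and TensorFlow run tensor operations on NVIDIA GPUs via CUDA. TensorFlow places operations on an available GPU by default, while PyTorch expects you to move models and tensors explicitly (for example with `.to("cuda")`). Large matrix multiplications can run one to two orders of magnitude faster than on a CPU, depending on hardware and workload.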

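- Leverage JIT Compilation: TorchScript compiles PyTorch models into an optimized, serializable format that can run without the Python interpreter. In PyTorch 2.x, `torch.compile` serves a similar purpose for speeding up ordinary eager-mode code.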

- Employ Vectorization: Avoid explicit Python loops over NumPy arrays and Pandas DataFrames. Use built-in vectorized operations that run in C under the hood.

- Profile Your Code: Tools like cProfile identify bottlenecks. Often, I/O operations, not computation, slow down pipelines.
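
The profiling and vectorization strategies work well together. The sketch below profiles a small pipeline with `cProfile`, where the loop-based function should dominate the report and the vectorized one should barely register. The function names and the array size are illustrative assumptions.

```python
import cProfile
import io
import pstats
import numpy as np

values = np.arange(200_000, dtype=np.float64)

def sum_of_squares_loop(arr):
    total = 0.0
    for x in arr:            # one interpreter iteration per element
        total += x * x
    return total

def sum_of_squares_vectorized(arr):
    return float(np.dot(arr, arr))   # a single call into compiled code

def pipeline():
    return sum_of_squares_loop(values), sum_of_squares_vectorized(values)

profiler = cProfile.Profile()
profiler.enable()
loop_result, vector_result = pipeline()
profiler.disable()

# Collect the most expensive calls, sorted by cumulative time.
report = io.StringIO()
pstats.Stats(profiler, stream=report).sort_stats("cumulative").print_stats(5)
print(report.getvalue())
```

In a real project you would profile the whole training or inference script the same way and optimize only what the report singles out.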

If you absolutely need raw speed for specific functions, write them in Cython or Numba. Cython translates Python-like code to C ahead of time, while Numba JIT-compiles numerical Python functions to machine code at runtime. Both bridge the gap between ease of use and performance. Most teams reserve this for hotspots identified through profiling.
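
As a hedged sketch of the Numba route, the function below is the kind of nested-loop hotspot that benefits from compilation. The `pairwise_l1` function is a hypothetical example, and the `try`/`except` fallback is an assumption added so the code still runs, uncompiled, where Numba is not installed.

```python
import numpy as np

try:
    from numba import njit          # optional dependency
except ImportError:
    def njit(func):                 # fallback: run as ordinary Python
        return func

@njit
def pairwise_l1(points):
    """Sum of absolute differences between every pair of rows."""
    n, d = points.shape
    out = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            total = 0.0
            for k in range(d):
                total += abs(points[i, k] - points[j, k])
            out[i, j] = total
    return out

points = np.array([[0.0, 0.0], [1.0, 2.0], [3.0, 1.0]])
distances = pairwise_l1(points)
print(distances)
```

With Numba present, the first call pays a one-time compilation cost and later calls run the compiled version.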

Community and Open Source Culture

The strength of any technology depends on its community. Python benefits from one of the largest developer communities globally. This means extensive documentation, countless tutorials, and active forums like Stack Overflow and GitHub.

When you encounter an error, someone else has likely solved it already. Search engines return relevant solutions instantly. This collective knowledge base reduces downtime significantly. Companies invest in Python because hiring skilled developers is easier than finding experts in niche languages.

Open source licensing further accelerates adoption. Major tech companies contribute back to the ecosystem. Google maintains TensorFlow, Meta supports PyTorch, and Hugging Face leads the natural language processing revolution with Transformers. This collaboration ensures continuous improvement and stability.

Real-World Applications Beyond Research

Python isn’t just for academic papers. It powers real-world systems across industries. In healthcare, AI models predict patient outcomes using electronic health records processed by Pandas. In finance, algorithmic trading systems execute trades based on patterns detected by Scikit-learn classifiers.

Autonomous vehicles rely on computer vision libraries like OpenCV integrated with deep learning frameworks. Self-driving systems must process a continuous stream of camera frames in real time, identifying pedestrians and obstacles. All this starts with Python scripts defining the model architecture.

E-commerce platforms use recommendation engines built on collaborative filtering algorithms. These systems analyze user behavior to suggest products, increasing sales and engagement. The backend logic is written in Python, deployed via Flask or FastAPI APIs.
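
The core of item-based collaborative filtering fits in a few lines of NumPy. This is a toy sketch under stated assumptions: the ratings matrix is made-up data, cosine similarity is one of several possible similarity measures, and the Flask or FastAPI serving layer is left out.

```python
import numpy as np

# Rows are users, columns are products; 0 means "no interaction yet".
# The ratings are made-up illustration data.
ratings = np.array([
    [5, 4, 0, 0],
    [4, 5, 1, 0],
    [0, 1, 5, 4],
    [1, 0, 4, 5],
], dtype=float)

# Cosine similarity between item columns.
norms = np.linalg.norm(ratings, axis=0)
item_similarity = (ratings.T @ ratings) / np.outer(norms, norms)

def recommend(user_index, top_n=1):
    """Rank items the user has not interacted with by similarity-weighted score."""
    user_ratings = ratings[user_index]
    scores = item_similarity @ user_ratings
    scores[user_ratings > 0] = -np.inf   # never re-recommend seen items
    return np.argsort(scores)[::-1][:top_n].tolist()

print(recommend(user_index=0))
```

User 0 has rated only the first two products, so the function ranks the remaining two by how similar they are to what that user already liked.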

Future Trends: Where Python Goes Next

As AI evolves, so does Python. Emerging trends include:

- Automated Machine Learning (AutoML): Tools like AutoKeras automate hyperparameter tuning and model selection, making AI accessible to non-experts.

- Federated Learning: Frameworks enable training models across decentralized devices while preserving privacy. Python serves as the orchestration layer.

- Explainable AI (XAI): Libraries like SHAP help interpret black-box models, crucial for regulated industries like banking and insurance.

- Edge AI Deployment: Converting Python models to lightweight formats for IoT devices using tools like TensorFlow Lite.

Type hinting adoption continues to grow. Adding type hints improves code maintainability and enables better IDE support. Static checkers such as mypy verify the annotations without running the code, catching many type errors before runtime. This trend suggests Python will become even more robust for large-scale enterprise applications.
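
A short sketch of what type-hinted AI code can look like. `TrainingConfig` and `steps_per_epoch` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

@dataclass
class TrainingConfig:
    learning_rate: float
    batch_size: int
    epochs: int = 10

def steps_per_epoch(dataset_size: int, config: TrainingConfig) -> int:
    """Number of batches needed to cover the dataset once (ceiling division)."""
    return -(-dataset_size // config.batch_size)

config = TrainingConfig(learning_rate=3e-4, batch_size=32)
print(steps_per_epoch(1000, config))

# A static checker such as mypy would flag the call below before it runs,
# because "32" is a str rather than an int:
# TrainingConfig(learning_rate=3e-4, batch_size="32")
```

The hints change nothing at runtime; they only give the checker and your IDE enough information to catch the mistake early.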

Is Python too slow for production AI systems?

Not necessarily. While pure Python is slower than C++, most AI libraries delegate heavy computation to optimized C/C++ backends. For inference, models are often exported to formats like ONNX or TensorRT, which run efficiently on hardware accelerators. The bottleneck then usually shifts to data loading and preprocessing, where Python’s data libraries (vectorized NumPy and Pandas operations, parallel data loaders) keep the overhead manageable.

Should I learn PyTorch or TensorFlow first?

Start with PyTorch if you’re interested in research or want an intuitive learning curve. Its dynamic graph makes debugging straightforward. Choose TensorFlow if you plan to deploy models in enterprise environments or work extensively with mobile/edge devices. Both share similar concepts, so switching later is manageable.

How do I optimize my Python AI code for speed?

First, profile your code to find bottlenecks. Then, replace loops with vectorized NumPy/Pandas operations. Enable GPU acceleration for deep learning tasks. Consider using Numba for numerical computations or Cython for custom extensions. Finally, ensure efficient data pipelines using generators instead of loading entire datasets into memory.
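
The generator advice in the last step can be sketched as follows. `read_records` is a hypothetical stand-in for a lazy data source such as lines streamed from a large file.

```python
from typing import Iterable, Iterator, List

def read_records(n_records: int) -> Iterator[int]:
    """Stand-in for a lazy data source, e.g. lines read from a large file."""
    for i in range(n_records):
        yield i

def batched(records: Iterable[int], batch_size: int) -> Iterator[List[int]]:
    """Yield fixed-size batches; the last batch may be smaller."""
    batch: List[int] = []
    for record in records:
        batch.append(record)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch

# Only one batch is held in memory at a time.
batch_sizes = [len(b) for b in batched(read_records(10), batch_size=4)]
print(batch_sizes)
```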

Can Python handle big data effectively?

Yes, through libraries like Dask and Apache Spark. Dask extends Pandas functionality to distributed clusters, handling datasets larger than RAM. Spark integrates seamlessly with Python via PySpark, enabling scalable data processing. For extremely large datasets, partitioning and streaming approaches keep memory usage low.
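
The streaming idea can be tried without a cluster. The sketch below uses the `chunksize` parameter of Pandas' `read_csv` instead of Dask or Spark, and aggregates a file piece by piece. The in-memory CSV and its column names are made-up illustration data.

```python
import io
import pandas as pd

# Hypothetical in-memory CSV standing in for a file too large to load at once.
csv_text = "user_id,amount\n" + "\n".join(f"{i % 3},{i}" for i in range(10))

totals = {}
# chunksize makes read_csv return an iterator of small DataFrames.
for chunk in pd.read_csv(io.StringIO(csv_text), chunksize=4):
    partial = chunk.groupby("user_id")["amount"].sum()
    for user_id, amount in partial.items():
        key = int(user_id)
        totals[key] = totals.get(key, 0) + int(amount)

print(totals)
```

Dask and PySpark apply the same partition-then-combine pattern, spread across cores or machines.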

What are the main security concerns with Python in AI?

Model poisoning and adversarial attacks pose significant risks. Always validate input data rigorously. Use sandboxed environments for untrusted code execution. Keep dependencies updated to patch vulnerabilities. Additionally, monitor model drift in production to detect unexpected behavior early. Secure API endpoints serving AI predictions against unauthorized access.
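
As one small piece of that checklist, input validation can look like the sketch below. The expected feature count and the allowed value range are hypothetical; in practice they would come from your model's input specification and training data.

```python
import numpy as np

EXPECTED_FEATURES = 4          # hypothetical model input width
FEATURE_RANGE = (-10.0, 10.0)  # hypothetical bounds seen during training

def validate_input(payload):
    """Return a clean float array, or raise ValueError for suspicious input."""
    array = np.asarray(payload, dtype=np.float64)
    if array.shape != (EXPECTED_FEATURES,):
        raise ValueError(
            f"expected {EXPECTED_FEATURES} features, got shape {array.shape}"
        )
    if not np.all(np.isfinite(array)):
        raise ValueError("input contains NaN or infinity")
    low, high = FEATURE_RANGE
    if array.min() < low or array.max() > high:
        raise ValueError("input outside the range seen during training")
    return array

print(validate_input([0.5, -1.2, 3.0, 9.9]))
```

Checks like these do not stop a determined adversarial attack, but they reject malformed and out-of-range requests before they reach the model.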